Install Apache Spark Standalone

- INSTALL APACHE SPARK STANDALONE MAC OS

- INSTALL APACHE SPARK STANDALONE INSTALL

- INSTALL APACHE SPARK STANDALONE CODE

INSTALL APACHE SPARK STANDALONE MAC OS

In the installation steps for Linux and Mac OS X, I will use pre-built releases of Spark.

INSTALL APACHE SPARK STANDALONE INSTALL

In this section I will cover deploying Spark in Standalone mode on a single machine using various platforms. Feel free to choose the platform that is most relevant to you to install Spark on. For now, we use a pre-built distribution which already contains a common set of Hadoop dependencies.

There are several options available for installing Spark. We could build it from the original source code, or download a distribution configured for different versions of Apache Hadoop.

INSTALL APACHE SPARK STANDALONE CODE

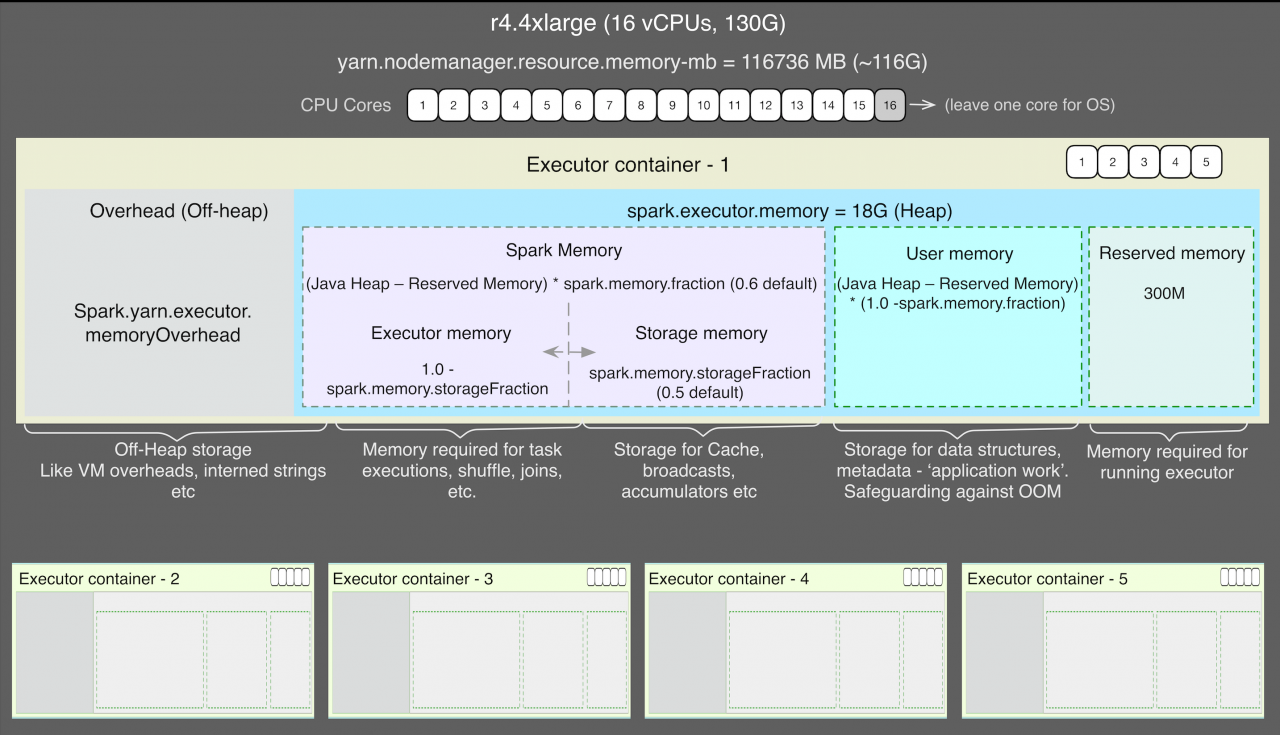

Spark can be installed either by downloading and compiling the source code for your environment, or by simply using the pre-built binaries. Spark can be deployed using YARN or Mesos as the cluster manager, but it also allows a simple standalone deployment. When using YARN, it is not necessary to install Spark on all three nodes; you have to install Apache Spark on one node only.

On Windows, select Ubuntu, then Get and Launch, to install the Ubuntu terminal (if the install hangs, you may ...).

Installing Apache Spark Standalone-Cluster in Windows (Sachin Gupta): here I will try to elaborate on a simple guide to install Apache Spark on Windows (without HDFS) and link it to a local standalone Hadoop cluster.
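To make the deployment options named above (YARN, Mesos, standalone) more concrete, here is a minimal Python sketch that maps each cluster manager to the value usually passed to the `--master` option of `spark-submit` or `spark-shell`. The helper name `master_url` and the `localhost` default are hypothetical, chosen only for illustration. The ports 7077 (standalone) and 5050 (Mesos) are the commonly documented defaults and may differ on your cluster.

```python
def master_url(manager, host="localhost", port=None):
    """Return a --master value for the given cluster manager.

    `master_url` is an illustrative helper, not part of Spark itself.
    """
    manager = manager.lower()
    if manager == "standalone":
        # Standalone masters usually listen on port 7077.
        return f"spark://{host}:{port or 7077}"
    if manager == "mesos":
        # Mesos masters usually listen on port 5050.
        return f"mesos://{host}:{port or 5050}"
    if manager == "yarn":
        # With YARN the cluster location comes from the Hadoop configuration.
        return "yarn"
    if manager == "local":
        # Run Spark in-process, using all available cores.
        return "local[*]"
    raise ValueError(f"unknown cluster manager: {manager!r}")


if __name__ == "__main__":
    for name in ("standalone", "yarn", "mesos", "local"):
        print(name, "->", master_url(name))
```

The resulting string would then be used as, for example, `spark-shell --master spark://localhost:7077` for a standalone master running on the same machine.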

Download Apache Spark-3.0.1 with hadoop2. For the Windows Subsystem for Linux (WSL) install, go to Start > Control Panel > Turn Windows features on or off.

Standalone mode is the simplest way to deploy Spark on a private cluster. It supports two container roles, master and worker. Depending on which one you need to start, you may pass either the master or the worker command to this container. We have installed all the dependencies and network configuration so that Spark runs error free. Note that the default configuration will expose ports 80 correspondingly for the master and the worker containers.

Apache Spark standalone is installed on erdos, and it does not include Hadoop. It is installed with MySQL to allow multiple users to start spark-shell or ...
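The master and worker roles described above can be sketched as a small Python helper that builds the command line for each role. It is only an illustration and not the actual entrypoint of the container mentioned here. The function name `role_command`, the install path `/opt/spark`, and the master URL `spark://localhost:7077` are assumptions. The two class names are the standalone master and worker entry points that ship with Spark and are launched through `bin/spark-class`.

```python
import posixpath

# Entry-point classes of the standalone master and worker daemons.
MASTER_CLASS = "org.apache.spark.deploy.master.Master"
WORKER_CLASS = "org.apache.spark.deploy.worker.Worker"


def role_command(role, spark_home="/opt/spark",
                 master_url="spark://localhost:7077"):
    """Build the argv list that starts the given standalone role.

    `spark_home` and `master_url` are assumed values for illustration.
    """
    launcher = posixpath.join(spark_home, "bin", "spark-class")
    if role == "master":
        return [launcher, MASTER_CLASS]
    if role == "worker":
        # A worker must be told which master to register with.
        return [launcher, WORKER_CLASS, master_url]
    raise ValueError(f"role must be 'master' or 'worker', got {role!r}")


if __name__ == "__main__":
    print(" ".join(role_command("master")))
    print(" ".join(role_command("worker")))
```

Returning a list instead of a single string keeps the command safe to hand to `subprocess.run` without shell quoting.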